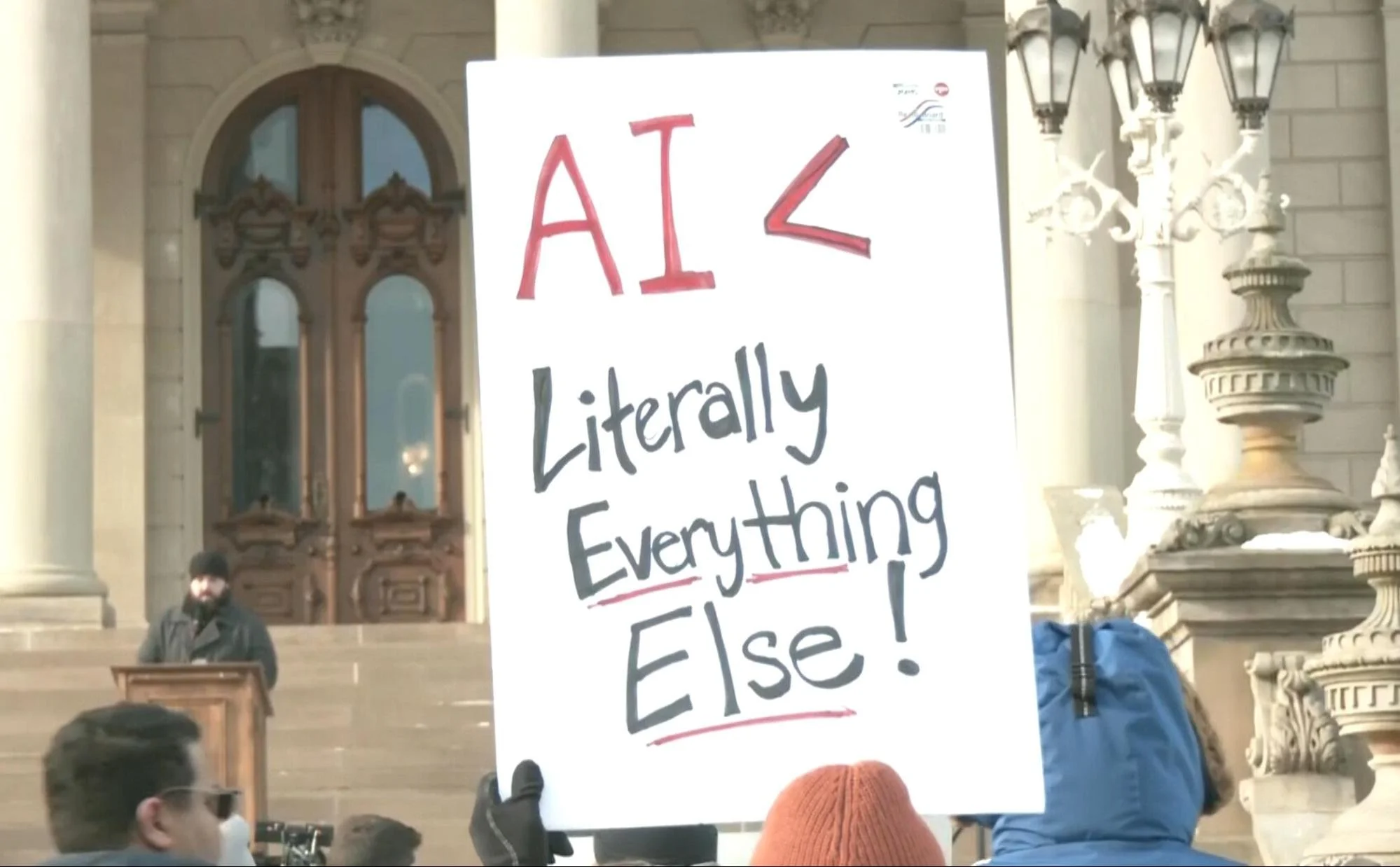

The Revolution Will Not Be AI-Generated

By Catherine Murphy

Courtesy of WZMQ

Recently, it’s become nearly impossible to scroll through social media without running into some form of AI-generated content. Whether it’s a new pop song that feels just slightly off or a funny video you know your mom will send you later, our feeds are flooded with it. And even if you could avoid it on social media, it has spread into nearly every aspect of life. Students use it to finish homework, companies use it to create ads, and suddenly, data centers are moving into town. Now that it’s clear AI isn’t going anywhere, people are rightly frustrated with its shortcomings, but is any of this our responsibility?

Obviously, most of the fault lies with the billion-dollar companies developing the technology; they’re the decision-makers, after all. But while it’s far easier to blame the Sam Altmans of the world than to consider that we might have a role to play as well, if we keep using their tech, we aren’t exactly revolutionaries either. Being an activist isn’t easy work, and if we want to label ourselves as such, we might have to decide that the cost of AI outweighs the benefit.

What first comes to mind is where these generative AI programs get their references. Countless artists and creators have come forward to claim their work has been plagiarized by ChatGPT. It’s beyond frustrating to put time and soul into something and find it bastardized in seconds for someone else’s entertainment. Even when the output isn’t a one-for-one copy or a ripoff of someone’s specific artistic style, AI has to learn from something. Whatever slop art it churns out is an amalgamation of countless creative works it gained access to, almost certainly taken without consent.

Then there is, of course, the environmental impact. As of last year, the Environmental and Energy Study Institute found that large data centers can use up to 5 million gallons of water per day, whether for cooling processors or for supplying power. And that’s one data center. One day. Multiply that by the ever-growing number of data centers, and it’s not difficult to see where the concern comes from. To put this in perspective, that same amount of water could supply a town of up to 50,000 people. But this isn’t just a hypothetical comparison; it’s often a reality.

Courtesy of NYT

When a data center is built, nearby communities quickly feel the strain. Even when tech companies claim AI data centers bring in jobs (which tend to last only through the construction phase and dry up soon after), any benefit is offset by a declining quality of life. The water needed has to come from somewhere. And it has to go somewhere. These centers use clean, drinkable water and, as a result, compete with local residents for what’s available. And once that water has been used, runoff is often an issue. Water used to cool processors can leach heavy metals into nearby rivers or lakes, devastating wildlife and the community at large.

And as you read this, if you find yourself thinking, “I’m the exception, I need to use AI,” I understand. We all want to rationalize why we should be excused from curbing a bad habit we just can’t quit. Whatever reason you have for believing you can’t live without it (I’ve seen every argument, from AI providing moral support to it helping summarize difficult readings), I want you to consider why your convenience should supersede the very real, very immediate danger it poses to disenfranchised groups.

If none of this convinces you to slow or stop your usage, think selfishly. You may think you can’t create art, finish an assignment, or even text a friend without a little extra assistance, but this thinking is what’s holding you back. Maybe your finished product won’t be as polished, but it’s yours. There’s far more value in human work, as flawed as it may be. And with each attempt, you can learn. Passing your projects off to some chatbot doesn’t give you any experience. There’s no opportunity for growth, and that should scare us more than making something imperfect.

Maybe in the future we can find a way to reduce AI’s environmental impact or stop it from training on stolen work from unconsenting artists, but that future seems distant. In the meantime, our choices send a far louder message to tech leaders than our words. We can say we want better, but as long as we keep using harmful generative AI, companies have no incentive to improve. Yes, the bulk of the blame lies with corporations, but we as consumers can’t pretend we don’t have the information at hand to make a better choice.